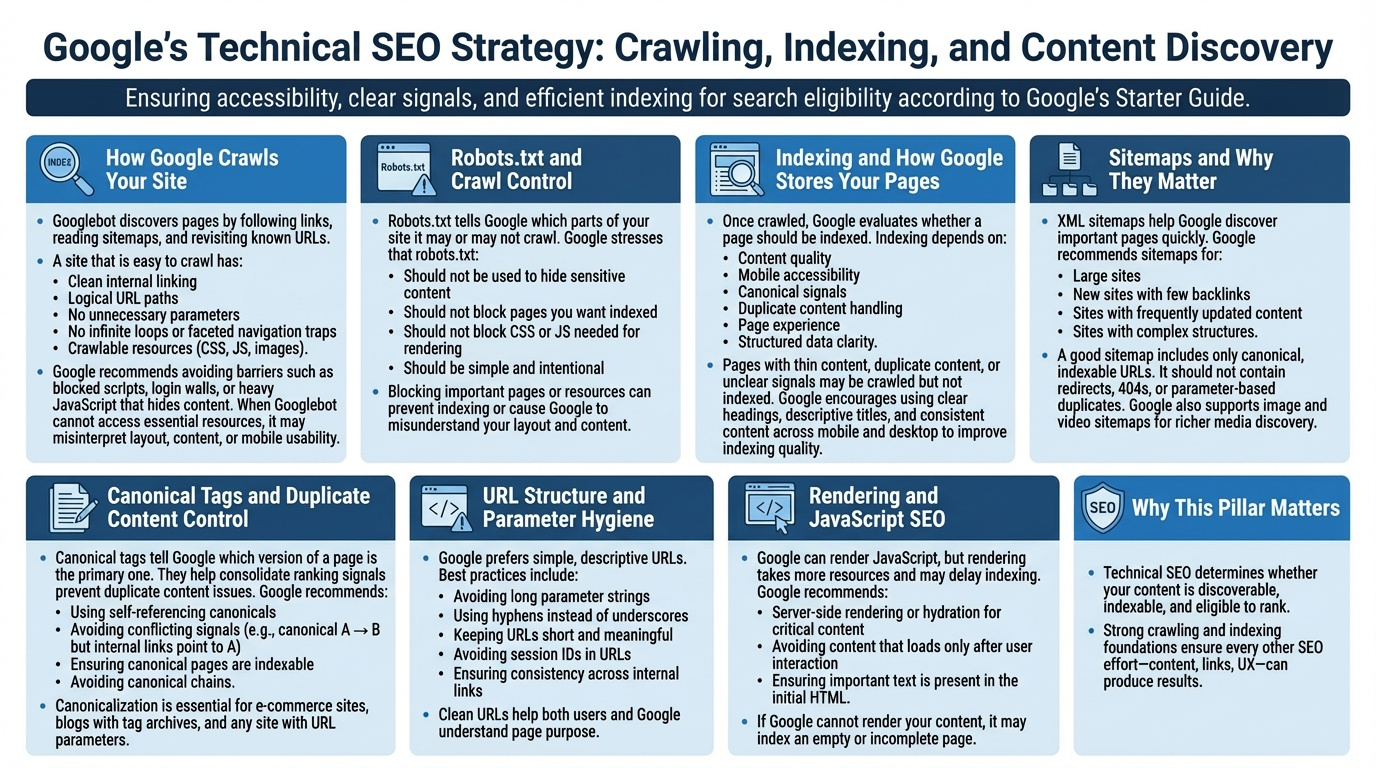

Technical SEO ensures Google can access, understand, and store your content. Google’s SEO Starter Guide emphasizes that even the best content cannot rank if Googlebot cannot crawl it or if indexing signals are unclear. This pillar covers the essential systems—crawling, indexing, sitemaps, robots.txt, canonicalization, and URL hygiene—that determine whether your pages are eligible to appear in search at all.

How Google Crawls Your Site

Googlebot discovers pages by following links, reading sitemaps, and revisiting known URLs. A site that is easy to crawl has:

- Clean internal linking

- Logical URL paths

- No unnecessary parameters

- No infinite loops or faceted navigation traps

- Crawlable resources (CSS, JS, images)

Google recommends avoiding barriers such as blocked scripts, login walls, or heavy JavaScript that hides content. When Googlebot cannot access essential resources, it may misinterpret layout, content, or mobile usability.
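
To make link-based discovery concrete, here is a minimal sketch of how a crawler might collect the internal links on one page. It uses only Python's standard library; the `LinkCollector` class, the `internal_links` helper, and the sample HTML are hypothetical names invented for this illustration, and the sketch does not claim to reproduce how Googlebot itself works.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags, the links a crawler can follow."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(page_url, html):
    """Return the sorted, de-duplicated same-host URLs linked from the page."""
    collector = LinkCollector()
    collector.feed(html)
    host = urlparse(page_url).netloc
    found = set()
    for href in collector.hrefs:
        # Resolve relative links and drop #fragments, which do not name new pages.
        absolute, _fragment = urldefrag(urljoin(page_url, href))
        parts = urlparse(absolute)
        if parts.scheme in ("http", "https") and parts.netloc == host:
            found.add(absolute)
    return sorted(found)


# Hypothetical page markup, used only for illustration.
SAMPLE_HTML = """
<a href="/guides/technical-seo">Guide</a>
<a href="pricing#plans">Pricing</a>
<a href="https://other.example.org/">External</a>
<a href="mailto:hello@example.com">Email</a>
"""

links = internal_links("https://www.example.com/blog/", SAMPLE_HTML)
print(links)
```

Running a helper like this across a site is one rough way to spot pages that no internal link reaches.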

Robots.txt and Crawl Control

Robots.txt tells Google which parts of your site it may or may not crawl. Google stresses that robots.txt:

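- Should not be used to hide sensitive content, because the file is publicly readable and a blocked URL can still be indexed if other pages link to it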

- Should not block pages you want indexed

- Should not block CSS or JS needed for rendering

- Should be simple and intentional

Blocking important pages keeps Google from reading their content, which can prevent them from being indexed properly, and blocking CSS or JS can cause Google to misunderstand your layout and content.
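
One way to catch accidental blocks is to test a list of important URLs against your rules before deploying them. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt content, the URLs, and the `blocked_urls` helper are hypothetical. The standard-library parser is an approximation and may differ from Google's own matching in edge cases such as wildcard patterns, so treat the result as a first check rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are made up for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /assets/js/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


urls_to_check = [
    "https://www.example.com/products/blue-widget",
    "https://www.example.com/cart/checkout",
    "https://www.example.com/assets/js/app.js",
]

print(blocked_urls(ROBOTS_TXT, urls_to_check))
```

In this made-up example the cart block is probably intentional, while the blocked script is the kind of rule that can interfere with rendering.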

Indexing and How Google Stores Your Pages

Once a page is crawled, Google evaluates whether it should be indexed. Indexing depends on:

- Content quality

- Mobile accessibility

- Canonical signals

- Duplicate content handling

- Page experience

- Structured data clarity

Pages with thin content, duplicate content, or unclear signals may be crawled but not indexed. Google encourages using clear headings, descriptive titles, and consistent content across mobile and desktop to improve indexing quality.
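
A rough self-audit for thin and duplicate pages can be sketched as follows. Everything here is an assumption made for illustration: the `audit_pages` helper, the sample pages, and especially the 50-word threshold, which is an arbitrary number and not a figure published by Google. The duplicate check only catches pages whose text is identical after lowercasing and whitespace cleanup.

```python
import hashlib
import re
from collections import defaultdict

MIN_WORDS = 50  # Arbitrary illustrative threshold, not a Google rule.


def normalize(text):
    """Lowercase the text and collapse whitespace so trivial differences vanish."""
    return re.sub(r"\s+", " ", text).strip().lower()


def audit_pages(pages):
    """Return (thin_urls, duplicate_groups) for a {url: body_text} mapping."""
    thin = []
    by_hash = defaultdict(list)
    for url, text in pages.items():
        cleaned = normalize(text)
        if len(cleaned.split()) < MIN_WORDS:
            thin.append(url)
        # Pages with identical normalized text share the same digest.
        digest = hashlib.sha256(cleaned.encode("utf-8")).hexdigest()
        by_hash[digest].append(url)
    duplicates = [sorted(urls) for urls in by_hash.values() if len(urls) > 1]
    return sorted(thin), duplicates


# Hypothetical page bodies for illustration.
long_body = "word " * 60
pages = {
    "/widgets": long_body,
    "/widgets?sort=price": long_body.upper(),
    "/about": "Short page.",
}

thin, duplicates = audit_pages(pages)
print(thin)
print(duplicates)
```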

Sitemaps and Why They Matter

XML sitemaps help Google discover important pages quickly. Google recommends sitemaps for:

- Large sites

- New sites with few backlinks

- Sites with frequently updated content

- Sites with complex structures

A good sitemap includes only canonical, indexable URLs. It should not contain redirects, 404s, or parameter‑based duplicates. Google also supports image and video sitemaps for richer media discovery.
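
The filtering rule above can be sketched in a few lines. This example assumes you already have crawl results as `(url, status, canonical)` tuples; that input shape, the `build_sitemap` helper, and the sample URLs are hypothetical. It keeps only URLs that returned 200, carry no query string, and declare themselves as canonical.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, status, canonical) tuples.

    A URL is kept only if it returned 200, has no query string,
    and declares itself as its own canonical.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, status, canonical in pages:
        if status != 200 or urlparse(url).query or canonical != url:
            continue
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical crawl results for illustration.
crawl = [
    ("https://www.example.com/", 200, "https://www.example.com/"),
    ("https://www.example.com/old-page", 301, "https://www.example.com/new-page"),
    ("https://www.example.com/missing", 404, ""),
    ("https://www.example.com/shoes?color=red", 200, "https://www.example.com/shoes"),
    ("https://www.example.com/shoes", 200, "https://www.example.com/shoes"),
]

sitemap_xml = build_sitemap(crawl)
print(sitemap_xml)
```

Only the home page and `/shoes` survive the filter; the redirect, the 404, and the parameter-based duplicate are left out.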

Canonical Tags and Duplicate Content Control

Canonical tags tell Google which version of a page is the primary one. They help consolidate ranking signals and prevent duplicate content issues. Google recommends:

- Using self‑referencing canonicals

- Avoiding conflicting signals (e.g., page A declares B as its canonical, but internal links still point to A)

- Ensuring canonical pages are indexable

- Avoiding canonical chains

Canonicalization is essential for e‑commerce sites, blogs with tag archives, and any site with URL parameters.
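
Canonical chains and loops are easy to miss by eye, so a small check can help. The sketch below assumes you have already extracted each page's declared canonical into a dictionary; the `resolve_canonical` helper, the `max_hops` limit, and the sample URLs are all hypothetical.

```python
def resolve_canonical(url, canonicals, max_hops=5):
    """Follow canonical declarations from url and return (final_url, hops).

    `canonicals` maps each URL to the canonical it declares. A hop count
    of 0 means the page is self-referencing, 1 means it points straight at
    a self-referencing page, and more than 1 indicates a chain.
    """
    seen = {url}
    hops = 0
    while hops < max_hops:
        # A URL with no recorded canonical is treated as self-referencing.
        target = canonicals.get(url, url)
        if target == url:
            return url, hops
        if target in seen:
            raise ValueError(f"canonical loop detected at {target}")
        seen.add(target)
        url = target
        hops += 1
    raise ValueError("canonical chain exceeds max_hops")


# Hypothetical canonical declarations for illustration.
canonicals = {
    "https://www.example.com/shoes": "https://www.example.com/shoes",
    "https://www.example.com/shoes?color=red": "https://www.example.com/shoes",
    "https://www.example.com/sale/shoes": "https://www.example.com/shoes?ref=sale",
    "https://www.example.com/shoes?ref=sale": "https://www.example.com/shoes",
}

for page in canonicals:
    final, hops = resolve_canonical(page, canonicals)
    label = "chain" if hops > 1 else "ok"
    print(f"{page} -> {final} ({hops} hops, {label})")
```

In this sample, `/sale/shoes` reaches the primary page only through an intermediate URL, which is the kind of chain worth flattening.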

URL Structure and Parameter Hygiene

Google prefers simple, descriptive URLs. Best practices include:

- Avoiding long parameter strings

- Using hyphens instead of underscores

- Keeping URLs short and meaningful

- Avoiding session IDs in URLs

- Ensuring consistency across internal links

Clean URLs help both users and Google understand page purpose.
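
These practices can be turned into a simple lint pass over your URLs. The sketch below is illustrative only: the `url_issues` helper, the list of session parameter names, and the length and parameter limits are assumptions chosen for the example, not values defined by Google.

```python
from urllib.parse import parse_qs, urlparse

# Assumed names and limits, chosen only for this illustration.
SESSION_PARAMS = {"sessionid", "sid", "phpsessid", "jsessionid"}
MAX_LENGTH = 100
MAX_PARAMS = 2


def url_issues(url):
    """Return a list of hygiene problems found in a single URL."""
    parts = urlparse(url)
    params = parse_qs(parts.query, keep_blank_values=True)
    issues = []
    if "_" in parts.path:
        issues.append("underscore in path")
    if len(url) > MAX_LENGTH:
        issues.append("long URL")
    if len(params) > MAX_PARAMS:
        issues.append("many parameters")
    if SESSION_PARAMS & {name.lower() for name in params}:
        issues.append("session ID in URL")
    return issues


# Hypothetical URLs for illustration.
clean = "https://www.example.com/blog/technical-seo-basics"
messy = "https://www.example.com/blog/technical_seo?sid=abc123&sort=asc&page=2"

print(url_issues(clean))
print(url_issues(messy))
```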

Rendering and JavaScript SEO

Google can render JavaScript, but rendering takes more resources and may delay indexing. Google recommends:

- Server‑side rendering or hydration for critical content

- Avoiding content that loads only after user interaction

- Ensuring important text is present in the initial HTML

If Google cannot render your content, it may index an empty or incomplete page.
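
A quick way to test the last recommendation is to check whether a key phrase appears in the HTML your server sends, before any JavaScript runs. The sketch below inspects raw markup only; the `VisibleText` class, the `text_in_initial_html` helper, and the sample pages are hypothetical. A page that fails this check may still be indexed after rendering, so read the result as a warning sign rather than proof.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text that sits outside <script> and <style> elements."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def text_in_initial_html(html, phrase):
    """Return True if phrase appears in the markup's visible text."""
    parser = VisibleText()
    parser.feed(html)
    return phrase.lower() in " ".join(parser.chunks).lower()


# Hypothetical responses for illustration.
SERVER_RENDERED = "<html><body><h1>Blue Widget</h1><p>Ships in two days.</p></body></html>"
CLIENT_ONLY = '<html><body><div id="root"></div><script>render("Blue Widget")</script></body></html>'

print(text_in_initial_html(SERVER_RENDERED, "Blue Widget"))
print(text_in_initial_html(CLIENT_ONLY, "Blue Widget"))
```

The second page mentions the product only inside a script, so the phrase is absent from its initial visible text.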

Why This Pillar Matters

Technical SEO determines whether your content is discoverable, indexable, and eligible to rank. Strong crawling and indexing foundations ensure every other SEO effort—content, links, UX—can produce results.